Unknown language [eml] - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Unknown language [eml] Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

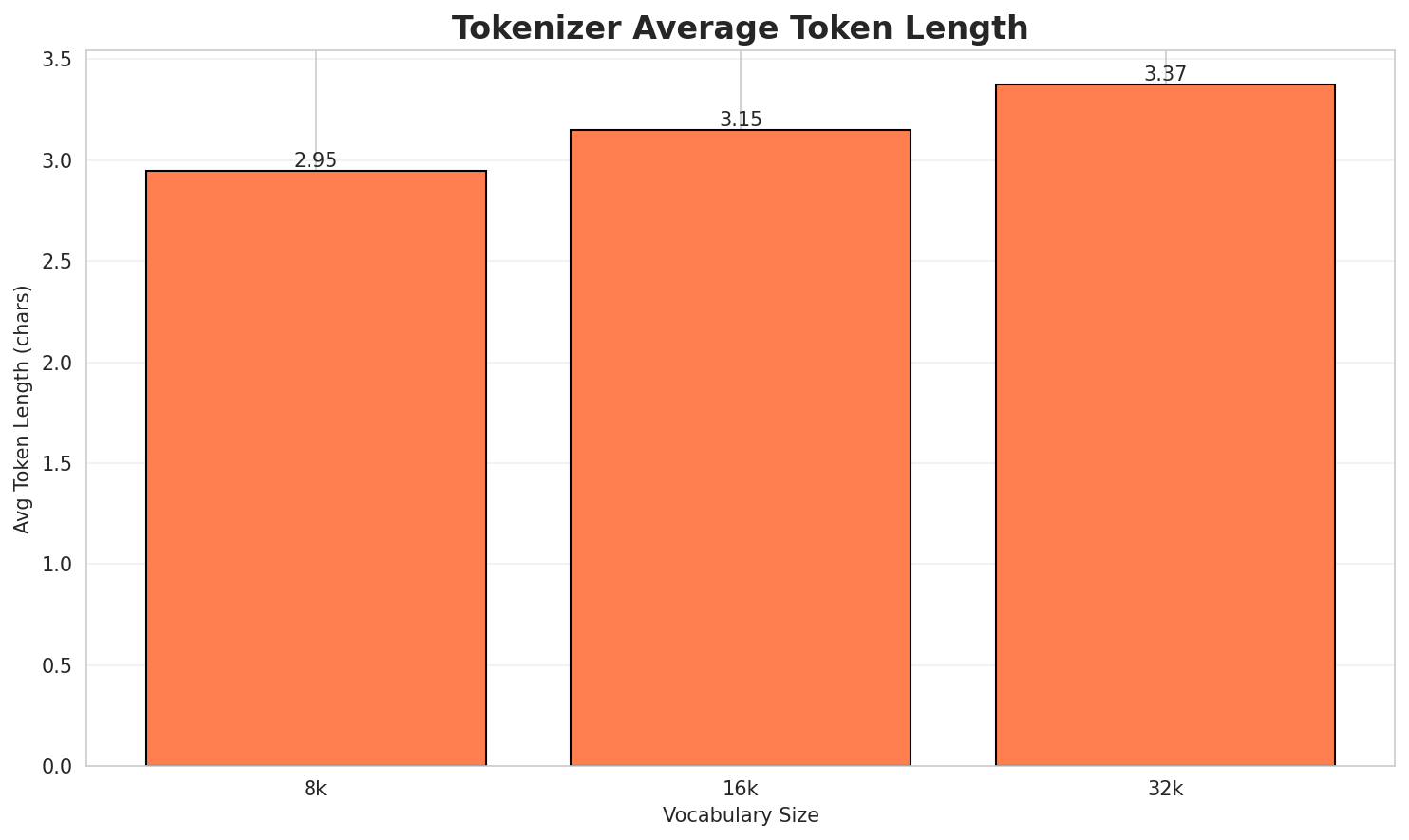

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 2.942x | 2.95 | 0.4433% | 289,426 |

| 16k | 3.144x | 3.15 | 0.4738% | 270,763 |

| 32k | 3.369x 🏆 | 3.37 | 0.5076% | 252,742 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: 'l è 'l nòm 'd un domìni genèric. Al funsiòuna da 'l zógn dal ed domìni tachê a ...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁' l ▁è ▁' l ▁nòm ▁' d ▁un ▁domìni ... (+17 more) | 27 |
| 16k | ▁' l ▁è ▁' l ▁nòm ▁' d ▁un ▁domìni ... (+17 more) | 27 |
| 32k | ▁' l ▁è ▁' l ▁nòm ▁' d ▁un ▁domìni ... (+17 more) | 27 |

Sample 2: 'l è 'l nòm 'd un domìni genèric. Al funsiòuna da 'l setèmber dal ed domìni tach...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁' l ▁è ▁' l ▁nòm ▁' d ▁un ▁domìni ... (+17 more) | 27 |
| 16k | ▁' l ▁è ▁' l ▁nòm ▁' d ▁un ▁domìni ... (+17 more) | 27 |
| 32k | ▁' l ▁è ▁' l ▁nòm ▁' d ▁un ▁domìni ... (+17 more) | 27 |

Sample 3: Al 294 'l è 'n an edl III sécol dal Calendàri gregoriàn. Avenimèint Nê Mort III

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁al ▁ 2 9 4 ▁' l ▁è ▁' n ... (+12 more) | 22 |
| 16k | ▁al ▁ 2 9 4 ▁' l ▁è ▁' n ... (+12 more) | 22 |
| 32k | ▁al ▁ 2 9 4 ▁' l ▁è ▁' n ... (+12 more) | 22 |

Key Findings

- Best Compression: 32k achieves 3.369x compression

- Lowest UNK Rate: 8k with 0.4433% unknown tokens

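- Trade-off: Larger vocabularies improve compression but increase model size; here the UNK rate also rises slightly, from 0.4433% (8k) to 0.5076% (32k)

- Recommendation: The 32k vocabulary gives the best compression of the three sizes evaluated, at the cost of a marginally higher UNK rate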

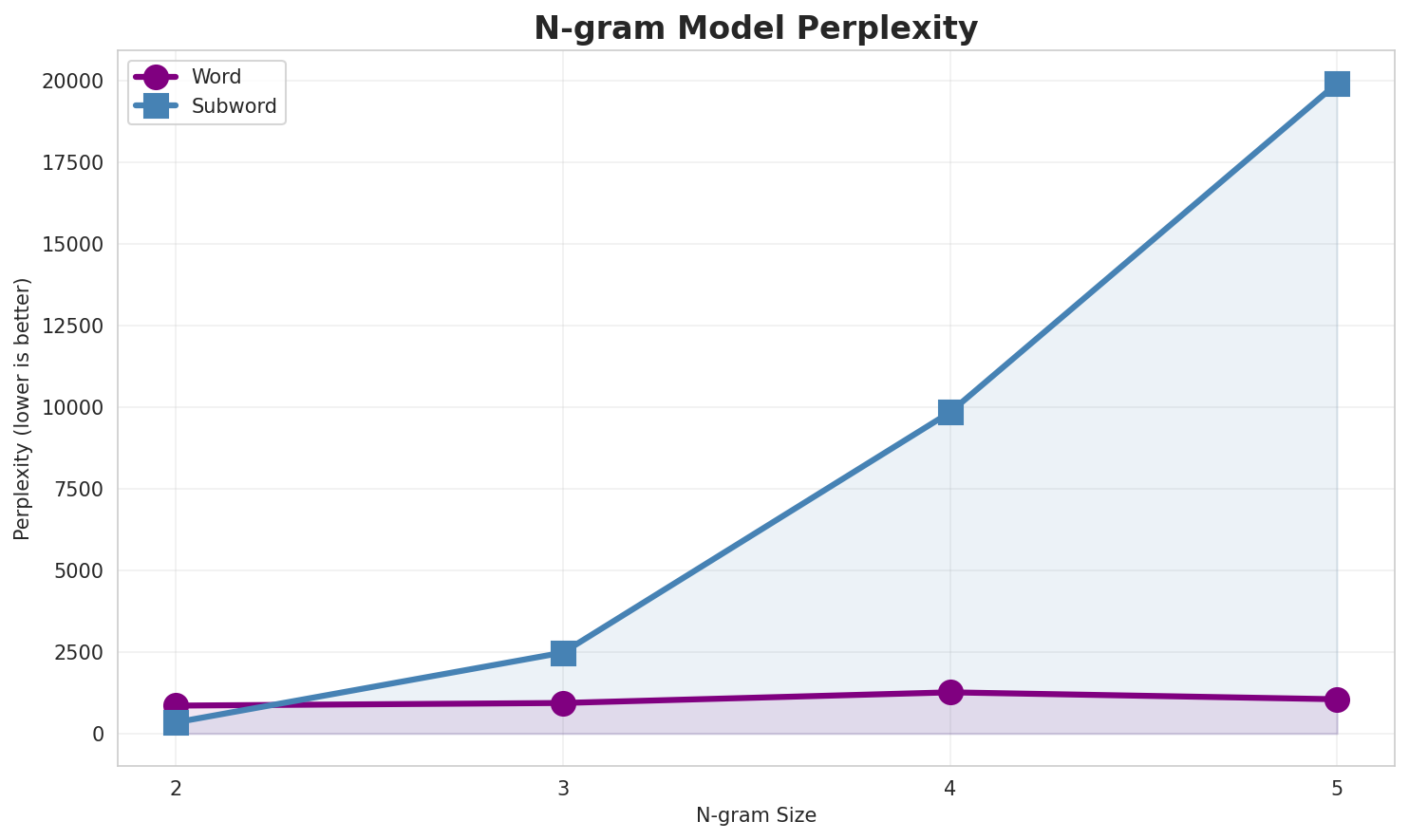

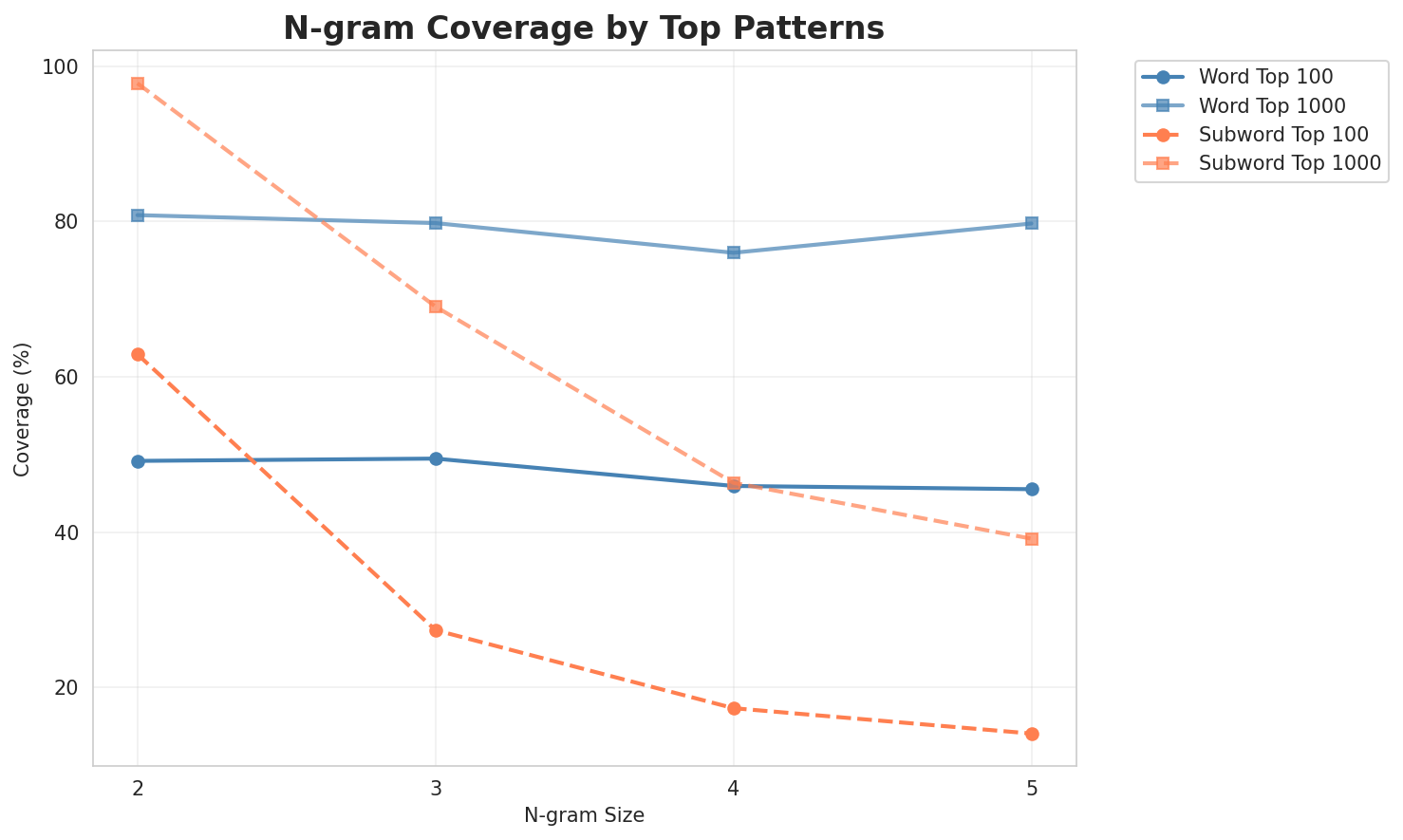

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 855 | 9.74 | 4,527 | 49.2% | 80.8% |

| 2-gram | Subword | 342 🏆 | 8.42 | 2,464 | 62.9% | 97.8% |

| 3-gram | Word | 936 | 9.87 | 6,071 | 49.5% | 79.8% |

| 3-gram | Subword | 2,480 | 11.28 | 17,300 | 27.4% | 69.1% |

| 4-gram | Word | 1,262 | 10.30 | 9,814 | 45.9% | 76.0% |

| 4-gram | Subword | 9,840 | 13.26 | 65,901 | 17.3% | 46.3% |

| 5-gram | Word | 1,050 | 10.04 | 7,194 | 45.5% | 79.7% |

| 5-gram | Subword | 19,916 | 14.28 | 117,450 | 14.0% | 39.1% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | l è | 4,349 |
| 2 | da l | 2,854 |
| 3 | d un | 2,584 |
| 4 | dal calendàri | 1,948 |
| 5 | è n | 1,667 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | l è n | 1,665 |
| 2 | dal calendàri gregoriàn | 1,584 |
| 3 | sécol dal calendàri | 1,575 |
| 4 | è n an | 1,575 |
| 5 | avenimèint nê mort | 1,412 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | l è n an | 1,575 |
| 2 | ed domìni tachê a | 1,255 |
| 3 | a funsionèr da l | 1,255 |
| 4 | domìni tachê a funsionèr | 1,255 |
| 5 | tachê a funsionèr da | 1,255 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | domìni tachê a funsionèr da | 1,255 |
| 2 | ed domìni tachê a funsionèr | 1,255 |
| 3 | tachê a funsionèr da l | 1,255 |
| 4 | l è l nòm d | 1,247 |
| 5 | l nòm d un domìni | 1,247 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | a _ | 44,681 |
| 2 | l _ | 36,354 |
| 3 | _ d | 31,152 |
| 4 | _ a | 28,707 |
| 5 | n _ | 26,332 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | a l _ | 19,233 |
| 2 | _ d a | 13,700 |
| 3 | _ i n | 10,014 |
| 4 | l a _ | 9,054 |
| 5 | d a l | 8,840 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ d a l | 8,766 |
| 2 | d a l _ | 8,710 |
| 3 | _ a l _ | 7,884 |
| 4 | _ e d _ | 6,634 |
| 5 | _ l a _ | 5,983 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ d a l _ | 8,679 |
| 2 | _ d a _ ' | 2,988 |
| 3 | ' l _ è _ | 2,975 |
| 4 | l _ è _ ' | 2,854 |
| 5 | d a _ ' l | 2,762 |
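A minimal sketch of how counts like those in the tables above could be produced. It assumes lowercased text, apostrophes treated as separators for word n-grams, and `_` standing in for a space in subword n-grams; these conventions are inferred from the tables, not confirmed pipeline behavior. `word_ngrams` and `char_ngrams` are hypothetical helper names.

```python
from collections import Counter


def word_ngrams(text: str, n: int) -> Counter:
    """Count word n-grams after lowercasing and splitting on spaces/apostrophes."""
    words = text.lower().replace("'", " ").split()
    # zip over n shifted copies of the word list yields consecutive n-tuples
    return Counter(zip(*(words[i:] for i in range(n))))


def char_ngrams(text: str, n: int) -> Counter:
    """Count character n-grams, writing spaces as '_' (assumed convention)."""
    chars = text.lower().replace(" ", "_")
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))


sample = "'l è 'l nòm 'd un domìni"
word_bigrams = word_ngrams(sample, 2)
char_bigrams = char_ngrams("al dal", 2)
```

Calling `word_bigrams.most_common(5)` or `char_bigrams.most_common(5)` on a full corpus would give rankings in the same shape as the tables above.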

Key Findings

- Best Perplexity: 2-gram (subword) with 342

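- Entropy Trend: Increases with n-gram size for subword models (8.42 to 14.28 bits) and stays roughly flat for word models (9.74 to 10.30 bits), so larger n-grams are not more predictable on this corpus

- Coverage: Top-1000 patterns cover between 39.1% (5-gram subword) and 97.8% (2-gram subword) of the corpus

- Recommendation: 2-gram subword for lowest perplexity; 4-gram and 5-gram models capture longer context but score substantially higher perplexity here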

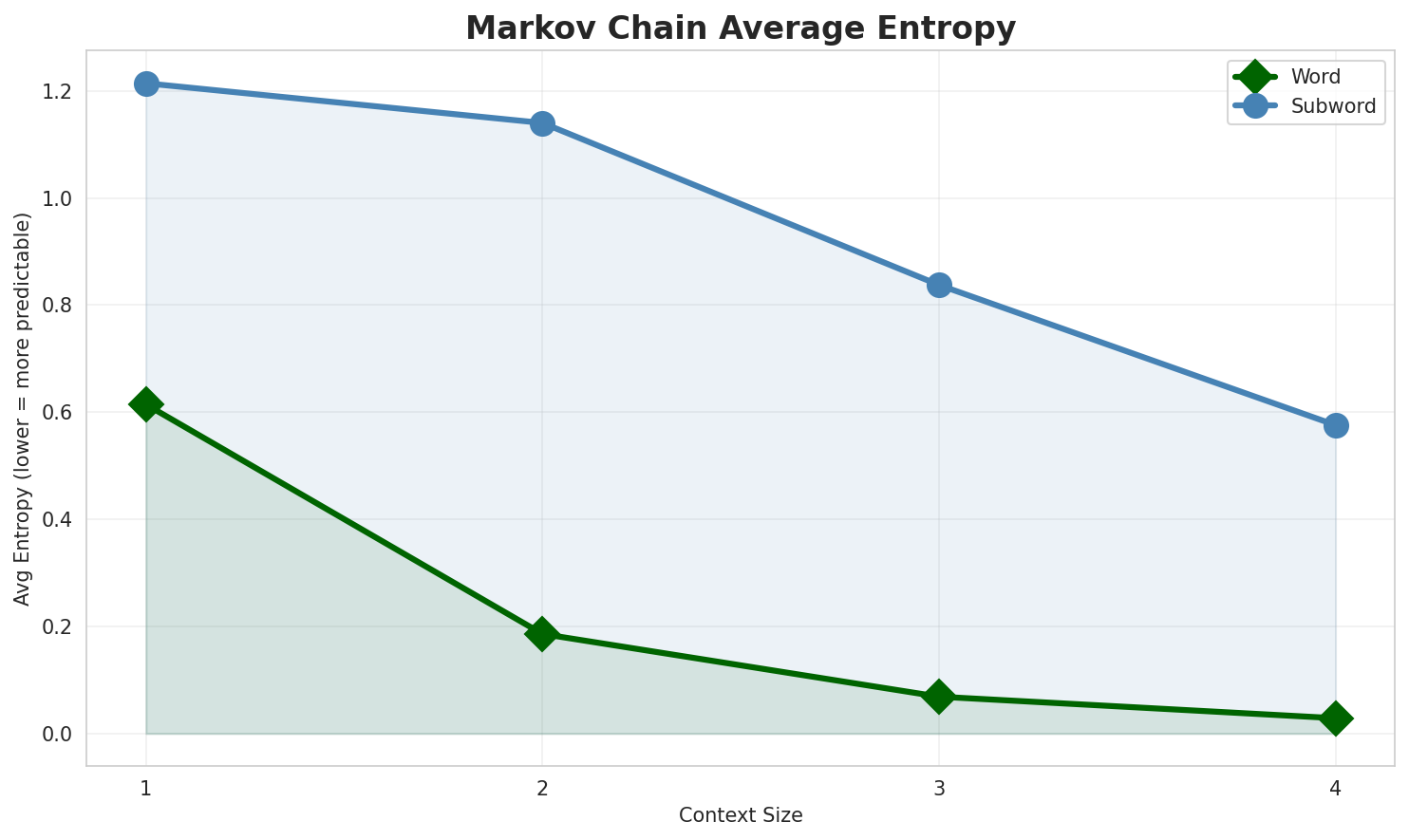

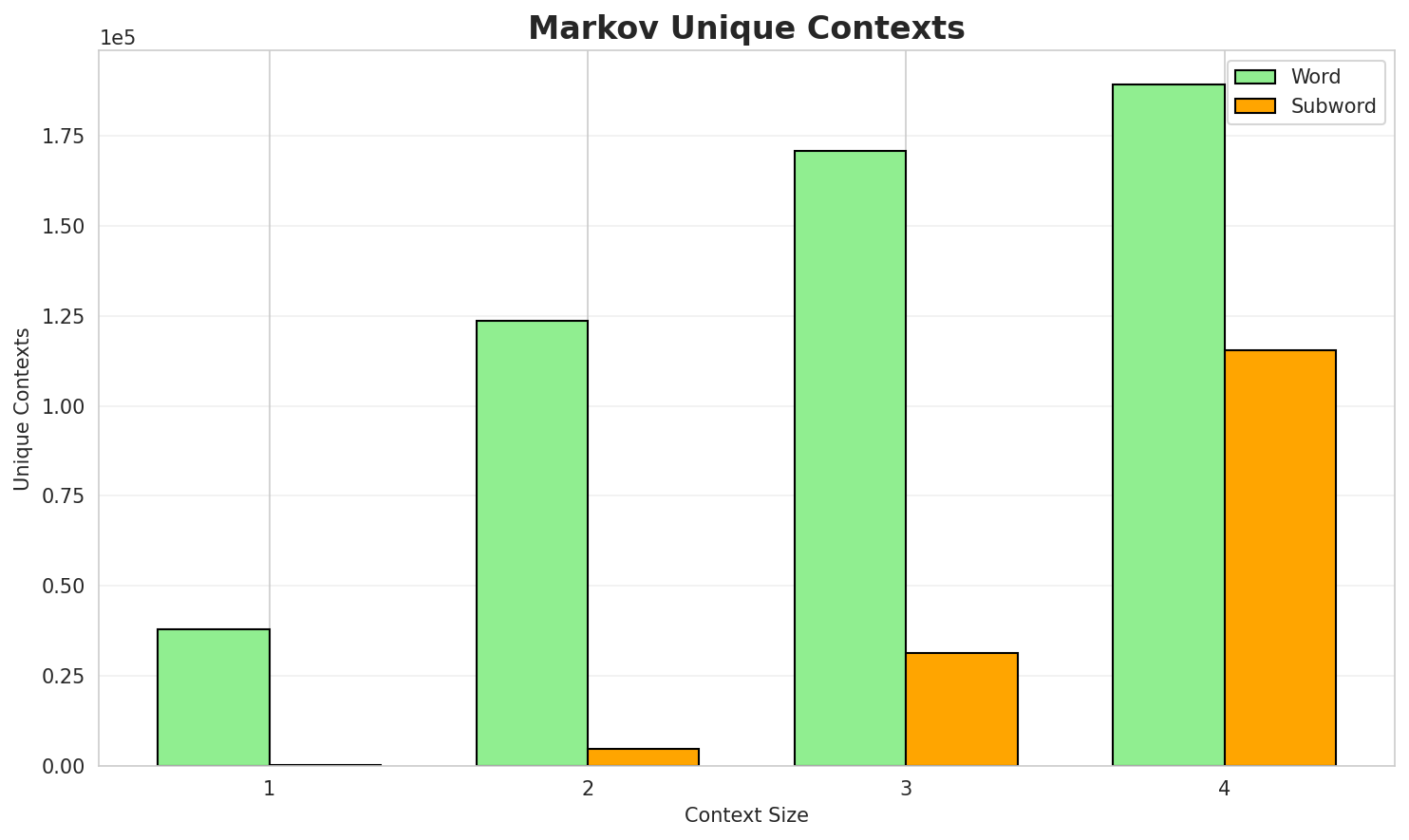

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.6144 | 1.531 | 3.27 | 38,079 | 38.6% |

| 1 | Subword | 1.2142 | 2.320 | 11.72 | 398 | 0.0% |

| 2 | Word | 0.1859 | 1.138 | 1.39 | 123,729 | 81.4% |

| 2 | Subword | 1.1401 | 2.204 | 6.72 | 4,661 | 0.0% |

| 3 | Word | 0.0688 | 1.049 | 1.12 | 170,769 | 93.1% |

| 3 | Subword | 0.8376 | 1.787 | 3.69 | 31,279 | 16.2% |

| 4 | Word | 0.0286 🏆 | 1.020 | 1.05 | 189,112 | 97.1% |

| 4 | Subword | 0.5759 | 1.491 | 2.30 | 115,443 | 42.4% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

l é al progrâma pc 12 518 519 520 gonèl 22 ed domìni genèric al urèlal funsiòuna da per 4 quèśi prim sfènic difetìv 322 in sensu laudator temporis acti prudentesdal crìst 4 d oro una cumêdia d antonino inferito da l è l è l

Context Size 2:

l è n an dal vii sécol dal calendàri gregoriàn avenimèint nê guélf vi mort xiid un nùmer triangolèr moltìplica per 5 d un nùmer quèder moltìplica per 3 d un domìnidal calendàri gregoriàn avenimèint nê mort x

Context Size 3:

l è n an edl iii sécol dal calendàri gregoriàn avenimèint nê mort idal calendàri gregoriàn avenimèint nê mort viiiè n an edl viii sécol dal calendàri gregoriàn avenimèint nê mort xvi

Context Size 4:

l è n an edl ix sécol dal calendàri gregoriàn avenimèint nê mort vdomìni tachê a funsionèr da led domìni tachê a funsionèr da l

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_pe_gotili_l'n_iandogrin_menèiṣai_incōridl_stêst

Context Size 2:

a_cuns_e_tòra_fiōl_séco,_ed_unèli__drê_avōl_è_'l_59

Context Size 3:

al_sît_la_cà_paolo_da_63_in_difestìl_in-dóvv_a_un_di_c

Context Size 4:

_dal_calendàri_gregdal_viii_sèc._préma_al_funsiòuna_da_'l
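Samples like those above can be produced by walking a transition table. The following is a minimal sketch under stated assumptions, not the pipeline's actual generator: `train` and `generate` are hypothetical names, and the toy corpus is a single lowercased sentence taken from the tokenization samples.

```python
import random
from collections import defaultdict


def train(tokens, order):
    """Map each context (tuple of `order` tokens) to the list of observed next tokens."""
    chain = defaultdict(list)
    for i in range(len(tokens) - order):
        chain[tuple(tokens[i:i + order])].append(tokens[i + order])
    return chain


def generate(chain, seed_context, length, rng):
    """Extend the seed by sampling followers; stop early at an unseen context."""
    out = list(seed_context)
    order = len(seed_context)
    for _ in range(length):
        followers = chain.get(tuple(out[-order:]))
        if not followers:
            break
        out.append(rng.choice(followers))
    return out


corpus = "l è n an edl iii sécol dal calendàri gregoriàn avenimèint nê mort".split()
chain = train(corpus, 2)
text = generate(chain, ("l", "è"), 5, random.Random(0))
```

Because every two-word context in this toy corpus has exactly one follower, the walk simply reproduces the training sentence, which mirrors the near-deterministic behavior of the larger-context word models reported above.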

Key Findings

- Best Predictability: Context-4 (word) with 97.1% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (at context size 4, 189,112 unique contexts for the word model and 115,443 for the subword model)

- Recommendation: Context-3 or Context-4 for text generation

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 14,744 |

| Total Tokens | 272,012 |

| Mean Frequency | 18.45 |

| Median Frequency | 3 |

| Frequency Std Dev | 223.57 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | l | 12,992 |

| 2 | al | 10,267 |

| 3 | dal | 8,736 |

| 4 | a | 7,317 |

| 5 | ed | 6,740 |

| 6 | la | 6,622 |

| 7 | d | 5,491 |

| 8 | in | 5,032 |

| 9 | è | 4,792 |

| 10 | da | 4,480 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | espositìv | 2 |

| 2 | ecosistèma | 2 |

| 3 | trasformasiòun | 2 |

| 4 | galleria | 2 |

| 5 | space | 2 |

| 6 | velò | 2 |

| 7 | arriv | 2 |

| 8 | sèda | 2 |

| 9 | cumé | 2 |

| 10 | zûg | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.0159 |

| R² (Goodness of Fit) | 0.990784 |

| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 57.7% |

| Top 1,000 | 77.8% |

| Top 5,000 | 90.7% |

| Top 10,000 | 96.5% |

Key Findings

- Zipf Compliance: R²=0.9908 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 57.7% of corpus

- Long Tail: The 4,744 words beyond the top 10,000 account for only the remaining 3.5% of tokens

5. Word Embeddings Evaluation

5.1 Cross-Lingual Alignment

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.3584 | 0.4391 | N/A | N/A |

| mono_64d | 64 | 0.1134 | 0.4504 | N/A | N/A |

| mono_128d | 128 | 0.0166 | 0.4596 | N/A | N/A |

| aligned_32d | 32 | 0.3584 🏆 | 0.4411 | 0.0140 | 0.1660 |

| aligned_64d | 64 | 0.1134 | 0.4292 | 0.0460 | 0.2440 |

| aligned_128d | 128 | 0.0166 | 0.4457 | 0.0400 | 0.2640 |

Key Findings

- Best Isotropy: mono_32d and aligned_32d tie at 0.3584 (more uniform distribution); isotropy drops sharply as dimension grows

- Semantic Density: Average pairwise similarity of 0.4442. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 4.6% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual R@10 (0.2640); 64d aligned has the best R@1 (0.0460)

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | 1.037 | High formulaic/idiomatic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.

Productive Prefixes

| Prefix | Examples |
|---|---|
| -ca | cal, cavésin, caviân |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -a | scōla, algebra, câṣva |
| -um | coelum, adsum, 217śum |
| -na | vègna, teresina, ruvîna |
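The substitutability criterion described in this section (a unit counts as an affix if stripping it leaves a stem attested elsewhere) can be sketched for suffixes as follows. This is a simplified illustration, not the pipeline's implementation: `productive_suffixes`, its thresholds, and the toy vocabulary are all hypothetical, and "attested elsewhere" is approximated as "the stem is itself a vocabulary word".

```python
from collections import defaultdict


def productive_suffixes(vocab, max_len=3, min_stems=2):
    """Return suffixes whose removal yields an attested stem for >= min_stems words."""
    vocab = set(vocab)
    stems_by_suffix = defaultdict(set)
    for word in vocab:
        # try suffix lengths 1..max_len, always leaving a non-empty stem
        for k in range(1, min(max_len, len(word) - 1) + 1):
            stem, suffix = word[:-k], word[-k:]
            if stem in vocab:
                stems_by_suffix[suffix].add(stem)
    return {s: stems for s, stems in stems_by_suffix.items() if len(stems) >= min_stems}


# Hypothetical toy vocabulary (ASCII forms chosen for illustration only).
toy_vocab = ["stori", "storia", "scol", "scola", "dal"]
found = productive_suffixes(toy_vocab)
```

On this toy input only `a` qualifies, because both `stori` and `scol` also occur without it.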

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| asiò | 1.80x | 17 contexts | asiòṅ, asiòun, frasiòn |
| siòu | 1.79x | 17 contexts | asiòun, sesiòun, lesiòun |
| purt | 1.55x | 23 contexts | purtâ, purtê, purtä |
| iòun | 1.73x | 16 contexts | uniòun, asiòun, sesiòun |
| nter | 1.50x | 24 contexts | inter, nterra, dänter |
| sèin | 1.51x | 17 contexts | sèins, sèint, casèin |
| tèin | 1.48x | 16 contexts | latèin, estèin, putèin |
| ital | 1.53x | 14 contexts | italy, italo, vitali |
| tôri | 1.78x | 9 contexts | stôri, stôric, stôria |
| rèin | 1.46x | 14 contexts | rèina, trèin, terèin |
| inte | 1.59x | 11 contexts | inter, intern, interès |
| mèin | 1.79x | 8 contexts | mèint, camèin, mumèint |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| -ca | -a | 53 words | cavacürta, canpâgna |
| -ca | -na | 16 words | canpâgna, catalógna |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| cascaggna | ca-scagg-na | 3.0 | scagg |
| califòrgna | ca-lifòrg-na | 3.0 | lifòrg |
| campàggna | ca-mpàgg-na | 3.0 | mpàgg |
| castlaran | ca-stlaran | 1.5 | stlaran |
| philosophum | philosoph-um | 1.5 | philosoph |
| privilegium | privilegi-um | 1.5 | privilegi |
| calandäri | ca-landäri | 1.5 | landäri |
| referendum | referend-um | 1.5 | referend |
| metropolitana | metropolita-na | 1.5 | metropolita |
| carabinieri | ca-rabinieri | 1.5 | rabinieri |
| parmigiana | parmigia-na | 1.5 | parmigia |
| funsiòuna | funsiòu-na | 1.5 | funsiòu |
| carpigiano | ca-rpigiano | 1.5 | rpigiano |
| caraterésstic | ca-raterésstic | 1.5 | raterésstic |
| indipendentîxum | indipendentîx-um | 1.5 | indipendentîx |
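The splits in the table above are consistent with stripping at most one known prefix and one known suffix, preferring the longer suffix. The sketch below illustrates that simplified single-pass idea using the affix inventory from Section 6.2; it is not the Recursive Hierarchical Substitutability method itself, whose details are not given here. `segment` and `min_stem` are hypothetical names.

```python
PREFIXES = ("ca",)
SUFFIXES = ("na", "um", "a")  # ordered so longer suffixes are tried first


def segment(word: str, min_stem: int = 3) -> str:
    """Strip at most one prefix and one suffix, keeping a stem of >= min_stem chars."""
    before, after = [], []
    stem = word
    for prefix in PREFIXES:
        if stem.startswith(prefix) and len(stem) - len(prefix) >= min_stem:
            before.append(prefix)
            stem = stem[len(prefix):]
            break
    for suffix in SUFFIXES:
        if stem.endswith(suffix) and len(stem) - len(suffix) >= min_stem:
            after.append(suffix)
            stem = stem[:-len(suffix)]
            break
    return "-".join(before + [stem] + after)


splits = {w: segment(w) for w in ["cascaggna", "castlaran", "philosophum", "metropolitana"]}
```

Splits such as `ca-rabinieri` show the limits of a purely statistical criterion: a frequent initial string is not necessarily a real prefix.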

6.6 Linguistic Interpretation

Automated Insight: The language Unknown language [eml] shows high morphological productivity. The subword models are significantly more efficient than word models, suggesting a rich system of affixation or compounding.

Note on Idiomaticity: The high Idiomaticity Gap suggests a large number of frequent multi-word expressions or formulaic sequences that are statistically distinct from their component parts.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 32k BPE | Best compression (3.37x) |

| N-gram | 2-gram | Lowest perplexity (342) |

| Markov | Context-4 | Highest predictability (97.1%) |

| Embeddings | 128d (aligned) | Best cross-lingual retrieval (R@10 0.2640), per Section 5 |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
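As a minimal sketch of the definition above, the ratio is simply character count divided by token count. The function name and the example tokens are hypothetical and are not output from the released tokenizers.

```python
def compression_ratio(text: str, tokens: list[str]) -> float:
    """Characters per token; higher means fewer tokens for the same text."""
    if not tokens:
        raise ValueError("token list must not be empty")
    return len(text) / len(tokens)


# Hypothetical split of a 12-character string into 4 tokens.
text = "al calendari"
tokens = ["▁al", "▁cal", "end", "ari"]
ratio = compression_ratio(text, tokens)
```

Here `ratio` is 3.0, comparable in scale to the 2.9x to 3.4x values reported in Section 1.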

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

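What to seek: Lower is better. Perplexity typically decreases with larger n-grams (more context) when training data is plentiful; on small corpora, data sparsity can reverse the trend, as the Section 2 results show. Values vary widely by language and corpus size.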

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy usually decreases as n-gram size increases, provided the corpus is large enough to estimate the longer n-grams reliably.
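The relation perplexity = 2^entropy can be checked against the Section 2 results. The sketch below copies the (entropy, perplexity) pairs from that table and measures how far the reported perplexities deviate from 2^entropy; small gaps are expected because the table rounds entropy to two decimals.

```python
# (entropy in bits, reported perplexity) pairs copied from the Section 2 table.
reported = {
    "2-gram word": (9.74, 855),
    "2-gram subword": (8.42, 342),
    "3-gram word": (9.87, 936),
    "3-gram subword": (11.28, 2480),
    "4-gram word": (10.30, 1262),
    "4-gram subword": (13.26, 9840),
    "5-gram word": (10.04, 1050),
    "5-gram subword": (14.28, 19916),
}


def perplexity_from_entropy(entropy_bits: float) -> float:
    """Perplexity implied by an entropy expressed in bits."""
    return 2.0 ** entropy_bits


relative_errors = {
    name: abs(perplexity_from_entropy(h) - ppl) / ppl
    for name, (h, ppl) in reported.items()
}
worst = max(relative_errors.values())
```

All eight rows agree to within one percent, which is consistent with rounding alone.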

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.
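Average entropy and branching factor can both be read off a table of next-token counts per context. The sketch below is one plausible way to compute them; whether the report averages entropy uniformly over contexts or weights by context frequency is not stated, so the unweighted mean used here is an assumption, as are the function names.

```python
import math
from collections import Counter, defaultdict


def build_chain(tokens, order):
    """Count next tokens for every context of `order` consecutive tokens."""
    chain = defaultdict(Counter)
    for i in range(len(tokens) - order):
        chain[tuple(tokens[i:i + order])][tokens[i + order]] += 1
    return chain


def branching_factor(chain):
    """Mean number of distinct next tokens per context."""
    return sum(len(nxt) for nxt in chain.values()) / len(chain)


def average_entropy(chain):
    """Unweighted mean of per-context Shannon entropy, in bits (assumed averaging)."""
    total = 0.0
    for nxt in chain.values():
        n = sum(nxt.values())
        total += -sum((c / n) * math.log2(c / n) for c in nxt.values())
    return total / len(chain)


tokens = "a b a c a b a c".split()
chain1 = build_chain(tokens, 1)
chain2 = build_chain(tokens, 2)
```

In this toy sequence the context `a` has two equally likely followers at order 1, while every order-2 context has exactly one, so both metrics fall as the context grows.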

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
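The Section 3 table is consistent with taking the normalized entropy to be the average entropy clipped to the range [0, 1]. That clipping is an inference from the reported numbers, not a documented formula; the sketch below applies it and compares against the table.

```python
def predictability(avg_entropy_bits: float) -> float:
    """(1 - normalized entropy) * 100, with entropy clipped to [0, 1] (assumed)."""
    normalized = min(max(avg_entropy_bits, 0.0), 1.0)
    return (1.0 - normalized) * 100.0


# (average entropy, reported predictability %) pairs from the Section 3 table.
table = [
    (0.6144, 38.6), (1.2142, 0.0),
    (0.1859, 81.4), (1.1401, 0.0),
    (0.0688, 93.1), (0.8376, 16.2),
    (0.0286, 97.1), (0.5759, 42.4),
]
max_gap = max(abs(predictability(h) - p) for h, p in table)
```

Under this assumption the subword models with entropy above 1 bit score 0.0%, matching the two zero entries in the table.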

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

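Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1. The Zipf Coefficient in Section 4 (1.0159) is positive, so it appears to be reported as the magnitude of this slope.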

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
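Both the Zipf coefficient and R² come from an ordinary least-squares line fitted to log(frequency) against log(rank). The following is a minimal standard-library sketch of that fit; `zipf_fit` is a hypothetical name, and the input is synthetic, ideal Zipfian data rather than the report's vocabulary.

```python
import math


def zipf_fit(frequencies):
    """Fit log(freq) = intercept + slope * log(rank); return (slope, r_squared)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, 1.0 - ss_res / ss_tot


# Synthetic frequencies that follow Zipf's law exactly: f(rank) = 1000 / rank.
ideal = [1000.0 / rank for rank in range(1, 201)]
slope, r_squared = zipf_fit(ideal)
```

For this ideal input the slope is -1 and R² is 1 up to floating-point error; real corpora deviate at the head and tail of the distribution.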

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.
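Cumulative coverage is a running sum of word counts divided by the total token count. As a worked example, the sketch below uses the ten most common words and the total of 272,012 tokens from Section 4; `cumulative_coverage` is a hypothetical helper name.

```python
TOTAL_TOKENS = 272_012  # "Total Tokens" from the Section 4 statistics table

# Frequencies of the ten most common words, copied from Section 4.
top10_counts = [12_992, 10_267, 8_736, 7_317, 6_740, 6_622, 5_491, 5_032, 4_792, 4_480]


def cumulative_coverage(counts, total):
    """Return the coverage curve (in %) after each successive most-frequent word."""
    covered = 0
    curve = []
    for count in sorted(counts, reverse=True):
        covered += count
        curve.append(100.0 * covered / total)
    return curve


curve = cumulative_coverage(top10_counts, TOTAL_TOKENS)
```

The last entry of `curve` shows that the top ten words alone account for roughly 26.6% of all tokens, on the way to the 57.7% reported for the top 100.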

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
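A minimal standard-library sketch of the definition above, using small hand-written vectors rather than the released embeddings:

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_u * norm_v)


same_direction = cosine_similarity([1.0, 2.0], [2.0, 4.0])
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])
opposite = cosine_similarity([1.0, 0.0], [-1.0, 0.0])
```

The three cases cover the ends and midpoint of the range: parallel vectors score 1, orthogonal vectors 0, and opposite vectors -1.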

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,

author = {Kamali, Omar},

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

year = {2025},

doi = {10.5281/zenodo.18073153},

publisher = {Zenodo},

url = {https://huggingface.co/wikilangs},

institution = {Omneity Labs}

}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-04 14:33:51